Artificial intelligence has crossed a remarkable threshold in medical imaging. Board-certified radiologists with over 10 years of experience achieved only 0.54 AUC when attempting to distinguish AI-generated chest X-rays from real patient scans, performing barely better than random guessing. This breakthrough, published in BJR | Artificial Intelligence (2024), signals a fundamental shift in medical image synthesis.

What technology is powering this breakthrough? Diffusion models are generative AI systems that create unlimited, anatomically accurate synthetic medical images while preserving patient privacy and maintaining clinical validity. For rare diseases (defined in the United States as conditions affecting fewer than 200,000 people nationwide), where collecting sufficient imaging data has been nearly impossible, diffusion models for medical imaging represent a transformative solution.

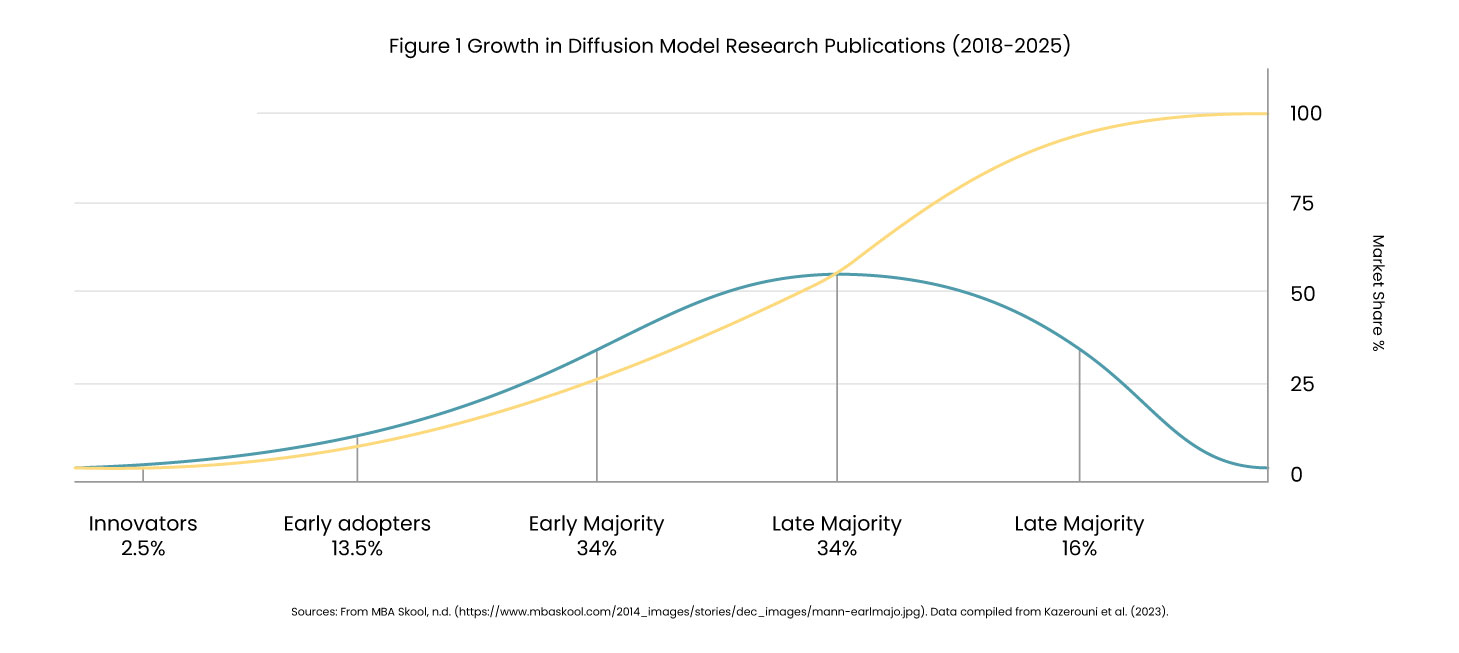

Research shows that publications on diffusion models in medical imaging surged from just 2 papers in 2018 to over 192 studies by 2025. The technology now operates across 13+ imaging modalities, with studies demonstrating 8-23% improvement in diagnostic AI accuracy when training on combined real and synthetic datasets.

The Data Scarcity Challenge in Medical AI

Medical AI development faces a fundamental barrier: rare diseases simply don’t generate enough data. A robust diagnostic AI system requires thousands of labeled images, but consider these realities:

- Amyotrophic Lateral Sclerosis (ALS): ~30,000 active US cases.

- Frontotemporal Dementia: ~60,000 US cases.

- Ultra-rare genetic variants: Sometimes <1,000 cases worldwide.

Even when patients exist, traditional data collection faces severe limitations:

Financial and Regulatory Barriers:

Individual imaging studies cost $500-2,000, with comprehensive protocols reaching $5,000+. Expert radiologist annotation adds $100-300 per image. HIPAA and GDPR create bottlenecks in cross-institutional data sharing, with IRB approvals taking 6-12 months for multi-site collaborations.

Time Requirements:

Longitudinal studies tracking disease progression require 5-10 years of patient follow-up, an impossible timeline for emerging diseases or urgent clinical needs. The result? Researchers might collect 100-200 scans over a decade for a rare disease, far short of the thousands needed for robust AI medical imaging training.

How Diffusion Models Work: The Technical Foundation

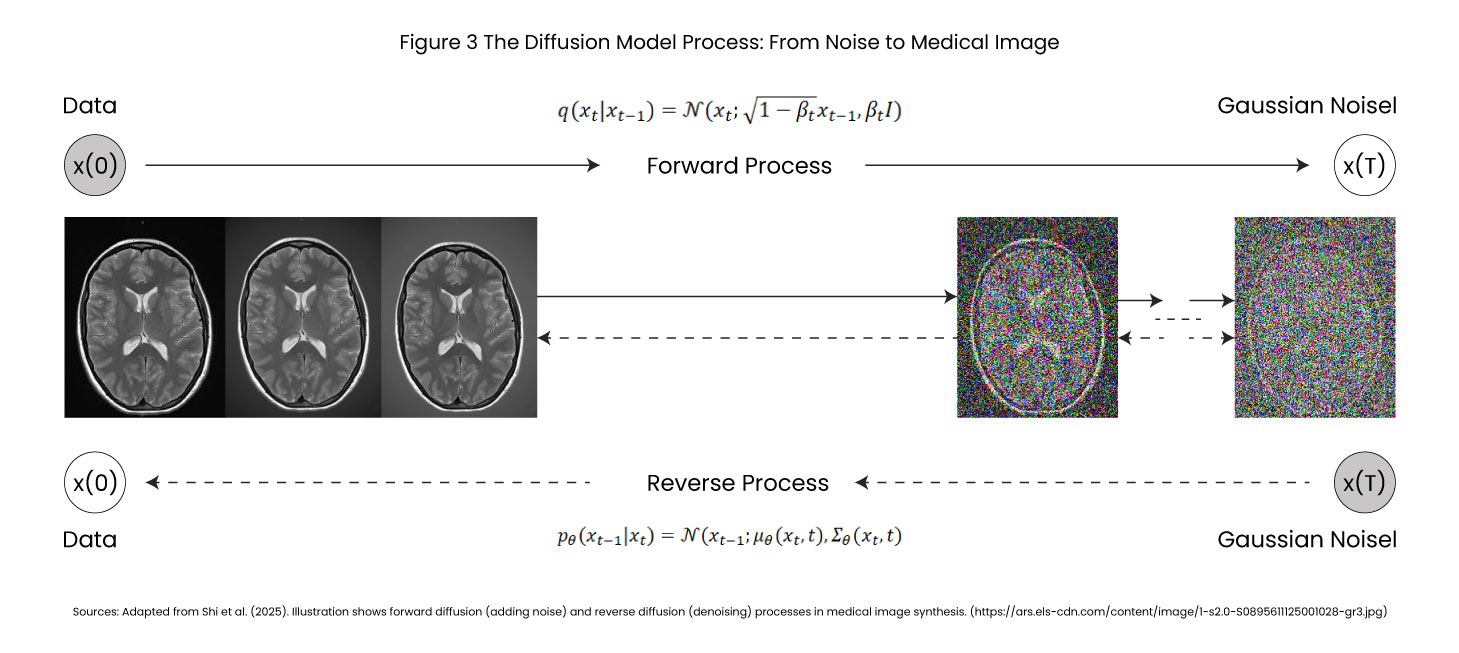

Diffusion models work through an elegant two-stage process inspired by thermodynamics, which often gives them an edge over GANs and VAEs for medical image synthesis.

Stage 1: Forward Diffusion (Learning to Destroy)

During training, the model sees thousands of real medical images (CT scans, MRIs, X-rays), each of which is gradually transformed into random noise over typically 1,000 timesteps:

- t=0: Original chest X-ray with clear anatomy.

- t=500: Significant noise, structures becoming obscured.

- t=1000: Pure random static.

The forward process itself is not learned: random Gaussian noise is added according to a fixed, mathematically well-defined schedule. What the model learns is to predict the noise that was added at a given step.
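
The noising process can be sketched in a few lines of NumPy. This is a minimal illustration, not a production pipeline: the linear schedule endpoints (`beta_start`, `beta_end`), the helper names, and the random array standing in for a normalized X-ray are all assumptions made for the example.

```python
import numpy as np

def make_alpha_bar(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative signal-retention factor for an assumed linear noise schedule."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    return np.cumprod(1.0 - betas)

def add_noise(x0, t, alpha_bar, rng):
    """Sample x_t given x_0 in closed form. t is 1-indexed; t=0 returns x0 unchanged."""
    if t == 0:
        return x0.copy(), np.zeros_like(x0)
    noise = rng.standard_normal(x0.shape)
    a = alpha_bar[t - 1]
    xt = np.sqrt(a) * x0 + np.sqrt(1.0 - a) * noise
    return xt, noise

rng = np.random.default_rng(0)
image = rng.uniform(-1.0, 1.0, size=(64, 64))  # stand-in for a normalized X-ray
alpha_bar = make_alpha_bar()

for t in (0, 500, 1000):
    xt, _ = add_noise(image, t, alpha_bar, rng)
    signal_weight = 1.0 if t == 0 else np.sqrt(alpha_bar[t - 1])
    print(f"t={t:4d}  weight on original image = {signal_weight:.4f}")
```

The printed weight shows how much of the original image survives at each timestep: it starts at 1 and shrinks toward 0, which is the "pure random static" end of the list above.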

Stage 2: Reverse Diffusion (Learning to Create)

Once trained, the model reverses the process. Starting from pure noise, it progressively removes noise in calibrated steps, revealing anatomically accurate synthetic medical images. A U-Net architecture with attention mechanisms predicts at each step, “What noise was added?” By iteratively subtracting predicted noise, coherent medical images emerge from chaos.
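
The iterative "subtract the predicted noise" loop might look roughly like the sketch below, which follows the standard DDPM sampling rule. Everything named here is an assumption for illustration: the linear schedule, the choice of `sqrt(beta_t)` as the per-step noise scale, and especially `oracle_predict_noise`, a hypothetical stand-in for the trained U-Net that cheats by knowing the target image so the example can run without any training.

```python
import numpy as np

def reverse_step(xt, t, predicted_noise, betas, alpha_bar, rng):
    """One DDPM-style denoising step from x_t to x_{t-1} (t is 1-indexed)."""
    beta_t = betas[t - 1]
    alpha_t = 1.0 - beta_t
    mean = (xt - beta_t / np.sqrt(1.0 - alpha_bar[t - 1]) * predicted_noise) / np.sqrt(alpha_t)
    if t == 1:
        return mean  # no fresh noise is injected on the final step
    return mean + np.sqrt(beta_t) * rng.standard_normal(xt.shape)

def sample(predict_noise, shape, betas, rng):
    """Start from pure noise and denoise step by step down to t=1."""
    alpha_bar = np.cumprod(1.0 - betas)
    x = rng.standard_normal(shape)
    for t in range(len(betas), 0, -1):
        x = reverse_step(x, t, predict_noise(x, t), betas, alpha_bar, rng)
    return x

betas = np.linspace(1e-4, 0.02, 1000)          # assumed linear schedule
alpha_bar = np.cumprod(1.0 - betas)
rng = np.random.default_rng(0)
target = rng.uniform(-1.0, 1.0, size=(32, 32))  # pretend "clean" image

def oracle_predict_noise(xt, t):
    """Hypothetical replacement for the U-Net: solves for the noise using the known target."""
    a = alpha_bar[t - 1]
    return (xt - np.sqrt(a) * target) / np.sqrt(1.0 - a)

generated = sample(oracle_predict_noise, target.shape, betas, rng)
print("max abs error vs. target:", np.abs(generated - target).max())
```

With a perfect noise predictor the loop lands on the target image. A real U-Net only approximates the noise, so the quality of generated images depends on how well that prediction is learned.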

Latent Diffusion Models: The Clinical Game-Changer

Operating directly on high-resolution medical images (512×512 or 1024×1024 pixels) is computationally prohibitive. Latent Diffusion Models solve this by:

- Compressing images into low-dimensional representations via Variational Autoencoders.

- Running diffusion in this compressed space (8-16× data reduction).

- Reconstructing full-resolution outputs.

Performance benefits: 50% faster generation, lower GPU memory requirements, and maintained image quality, all critical for clinical deployment of diffusion models in medical imaging.
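
To see where a reduction of this size can come from, the arithmetic below counts values before and after compression. The autoencoder settings are assumptions chosen for illustration (a grayscale input, a spatial downsampling factor of 8, and 4 or 8 latent channels), and the function names are hypothetical.

```python
def latent_shape(height, width, downsample=8, latent_channels=4):
    """Shape of the latent grid for an assumed autoencoder configuration."""
    if height % downsample or width % downsample:
        raise ValueError("image size must be divisible by the downsampling factor")
    return (latent_channels, height // downsample, width // downsample)

def reduction_factor(height, width, image_channels=1, **autoencoder_kwargs):
    """How many times fewer values the latent holds compared with the input image."""
    c, h, w = latent_shape(height, width, **autoencoder_kwargs)
    return (image_channels * height * width) / (c * h * w)

for size in (512, 1024):
    for channels in (4, 8):
        factor = reduction_factor(size, size, latent_channels=channels)
        shape = latent_shape(size, size, latent_channels=channels)
        print(f"{size}x{size} image -> latent {shape}: {factor:.0f}x fewer values")
```

Under these assumed settings the factor comes out at 16× with 4 latent channels and 8× with 8, which is consistent with the range quoted above. Other autoencoder designs would give different numbers.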

Conditional Generation: Precision Control

The power of diffusion models lies in controllability:

- Text-to-Image: "Generate chest X-ray with bilateral pneumonia in a 65-year-old male."

- Image-to-Image: Converting CT scans → MRI, or vice versa.

- Mask-Guided: Radiologists specify exact tumor locations for synthetic medical images.
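
The article does not say which conditioning mechanism these systems use. One widely used option for text-guided generation is classifier-free guidance, sketched below as an assumption: at each denoising step the model predicts noise twice, once with the prompt and once without, and the two predictions are blended. The function name and the `guidance_scale` default are illustrative choices.

```python
import numpy as np

def guided_noise(eps_uncond, eps_cond, guidance_scale=3.0):
    """Classifier-free guidance: push the prediction toward the conditioned direction.

    guidance_scale = 0 ignores the prompt, 1 uses the conditional prediction as is,
    and larger values follow the prompt more strongly.
    """
    return eps_uncond + guidance_scale * (eps_cond - eps_uncond)

# Toy noise predictions standing in for two U-Net forward passes.
rng = np.random.default_rng(0)
eps_uncond = rng.standard_normal((4, 4))
eps_cond = rng.standard_normal((4, 4))

for scale in (0.0, 1.0, 3.0):
    blended = guided_noise(eps_uncond, eps_cond, scale)
    print(f"scale={scale}: distance from unconditional = {np.abs(blended - eps_uncond).mean():.3f}")
```

The blended prediction would then be used in place of the plain noise estimate in each reverse diffusion step.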

Applications of Diffusion Models in Medical Imaging

Application 1: Data Augmentation for Rare Diseases

A 2025 study available on PMC (PubMed Central) demonstrated diffusion models for neuroimaging data augmentation in ALS and frontotemporal dementia:

- Dataset: 150 real brain MRI scans.

- Generation: 1,500 synthetic medical images (10× augmentation).

- Impact: Enabled diagnostic AI training previously impossible.

- Cost savings: $2M+ vs. traditional prospective collection.

Performance gains in medical image synthesis:

- 85.7% sensitivity in liver lesion detection (vs. 78.6% real-only).

- 23% improvement in certain diagnostic tasks.

- 0.941 PPV in mitotic phase classification (vs. 0.718 baseline).

These metrics demonstrate how AI medical imaging systems benefit dramatically from synthetic medical images.
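
For readers less familiar with the metrics quoted above, the sketch below shows how sensitivity and PPV are computed from prediction counts. The counts are hypothetical, picked only so that the ratios land near the quoted figures. They are not taken from the cited studies.

```python
def sensitivity(true_pos, false_neg):
    """Fraction of actual positives that the model detects."""
    return true_pos / (true_pos + false_neg)

def ppv(true_pos, false_pos):
    """Positive predictive value: fraction of positive calls that are correct."""
    return true_pos / (true_pos + false_pos)

# Hypothetical counts for illustration only.
print(f"sensitivity, real only:        {sensitivity(11, 3):.3f}")
print(f"sensitivity, real + synthetic: {sensitivity(12, 2):.3f}")
print(f"PPV:                           {ppv(16, 1):.3f}")
```

Sensitivity answers "how many true cases did we catch?", while PPV answers "how many of our positive calls were right?". Augmentation can move one without moving the other, so they are reported separately.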

Application 2: Privacy-Preserving Multi-Institutional Collaboration

During COVID-19, 127 hospitals participated in AI development via synthetic medical images.

- Each hospital trained diffusion models on local patient data.

- Generated synthetic COVID-19 chest X-ray datasets.

- Shared freely without HIPAA/GDPR violations.

- Collaborative model developed in 3 months vs. 12-18 months with traditional data sharing.

This showcases generative AI in healthcare, enabling research at unprecedented speed while maintaining regulatory compliance.

Application 3: FDA Regulatory Validation

FDA medical device submissions require testing across diverse populations and rare presentations. Synthetic data can support this in three ways:

- Edge case generation: Creating test examples that natural data collection would miss.

- Demographic diversity: Balancing datasets across age, sex, and ethnicity to detect algorithmic bias.

- Rapid iteration: Validating model updates without waiting years for new data collection.
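
As one illustration of the demographic balancing idea, the sketch below works out how many synthetic images each group would need so that all groups reach the same size. The function name, the age bands, and the counts are hypothetical, and real balancing would usually consider several attributes jointly.

```python
from collections import Counter

def synthetic_quota(group_labels, target_per_group=None):
    """Number of synthetic images to generate per group to reach a common target."""
    counts = Counter(group_labels)
    target = target_per_group if target_per_group is not None else max(counts.values())
    return {group: max(0, target - n) for group, n in counts.items()}

# Hypothetical age-band labels for a small real dataset.
real_labels = ["18-40"] * 120 + ["41-65"] * 200 + ["65+"] * 45
print(synthetic_quota(real_labels))
```

By default the largest group sets the target, so only under-represented groups receive synthetic images.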

Current FDA stance (2026): Synthetic medical images are accepted as training augmentation. Purely synthetic validation is not yet approved but is under active evaluation for medical image synthesis applications.

Application 4: Medical Education and Training

Diffusion models in medical imaging transform medical education:

- Rare pathology exposure: Students can study diseases they may never see in practice.

- Unlimited practice: Radiologists-in-training gain access to a practically unlimited supply of diagnostic exercises.

- Fewer privacy concerns: Training materials can be shared without patient consent issues.

Real-World Implementations and Industry Adoption

Academic Breakthroughs in Diffusion Models for Medical Imaging:

A) Sizikova et al. (2024) – BJR | Artificial Intelligence

Radiologists achieved only 0.54 AUC when distinguishing synthetic from real chest X-rays.

Significance: Crossed the perceptual threshold for clinical realism in medical image synthesis.

B) Kazerouni et al. (2023) – Medical Image Analysis

A comprehensive survey analyzing 192 papers on diffusion models in medical imaging.

Conclusion: Diffusion models are becoming the dominant generative AI approach in healthcare.

C) UniMIE Study (2025) – Communications Medicine

Training-free diffusion model for medical image enhancement.

Performance demonstrated across 13+ modalities at once, including CT, MRI, X-ray, ultrasound, and PET.
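
To make the 0.54 AUC figure from study A concrete: AUC can be read as the probability that a reader gives a randomly chosen synthetic image a higher "looks synthetic" score than a randomly chosen real one, so 0.5 means pure guessing. The sketch below computes it by direct pairwise comparison. The reader scores are hypothetical and only illustrate the calculation.

```python
def auc(scores_positive, scores_negative):
    """Probability that a positive outscores a negative, counting ties as half."""
    wins = 0.0
    for p in scores_positive:
        for n in scores_negative:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(scores_positive) * len(scores_negative))

# Hypothetical 1-5 "how synthetic does this look?" ratings from one reader.
synthetic_scores = [3, 2, 4, 3, 1, 5]
real_scores = [3, 2, 4, 2, 1, 5]
print(f"AUC = {auc(synthetic_scores, real_scores):.2f}")
```

When the two score lists overlap almost completely, as in this toy case, the AUC stays close to 0.5, which is the situation the radiologists in the study found themselves in.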

Industry Adoption:

- NVIDIA MONAI: Production-ready diffusion model implementations and GPU optimization for clinical workflows.

- Google Health: Research on chest X-ray and retinal imaging synthesis.

- Startups: Synthetic medical data-as-a-service for pharma/biotech AI development.

Technical Capabilities and Current Limitations

Strengths of Diffusion Models in Medical Imaging

- Anatomical Fidelity: Maintains proper organ structures and spatial relationships in synthetic medical images.

- Controllability: Supports text-guided medical image synthesis and other forms of conditional generation.

- Scalability: Unlimited generation once trained, critical for rare disease data augmentation.

- Privacy: Synthetic medical images carry no direct patient identifiers, provided the model has not memorized individual training images.

Limitations and Challenges

- Domain Gap: Synthetic images differ subtly in their statistics from real data, and AI medical imaging models trained purely on synthetic images underperform by 5-15%.

- Rare Pathology Representation: Can only generate variations of known patterns in medical image synthesis.

- Computational Costs: Training diffusion models requires high-end GPUs and weeks of computation.

- Validation Required: Expert radiologist review is still needed for quality assurance.

- Misuse Potential: Could generate fraudulent medical records or fake diagnostic images.

Ethical Considerations in Generative AI Healthcare

- Patient Consent: Were the original patients informed that their images would train generative AI models for medical image synthesis?

- Algorithmic Bias: If training data underrepresents certain demographics, synthetic medical images perpetuate bias. Solution: Intentional diversity in generation, comprehensive bias audits for AI medical imaging systems.

- Clinical Validity: Who bears responsibility if AI trained on synthetic medical images makes diagnostic errors?

Governance Best Practices for Diffusion Models in Medical Imaging

Technical Safeguards

- Digital watermarking for provenance tracking of synthetic medical images.

- Access controls to prevent unauthorized generation.

- Audit trails documenting all synthetic data creation.
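
An audit trail entry for a generated image could be as simple as the record sketched below. This is one possible design, not an established standard: the field names, the `model_id` value, and the in-memory list standing in for an append-only log are all hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

def provenance_record(image_bytes, model_id, prompt, created_at=None):
    """Build a log entry that ties a synthetic image to how it was generated."""
    return {
        "sha256": hashlib.sha256(image_bytes).hexdigest(),  # fingerprint of the exact file
        "synthetic": True,
        "model_id": model_id,
        "prompt": prompt,
        "created_at": created_at or datetime.now(timezone.utc).isoformat(),
    }

def append_to_audit_log(record, log_lines):
    """Append the record as one JSON line; a real system would write to durable storage."""
    log_lines.append(json.dumps(record, sort_keys=True))

audit_log = []
fake_image = b"\x89PNG placeholder bytes for a generated image"
record = provenance_record(fake_image, "cxr-diffusion-demo", "bilateral pneumonia, 65-year-old male")
append_to_audit_log(record, audit_log)
print(audit_log[0])
```

Hashing the image bytes means that any later copy of the file can be checked against the log to confirm it is a known synthetic image.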

Institutional Oversight

- Ethics board review for medical image synthesis projects.

- Mandatory radiologist validation before research use.

- Continuous monitoring of deployed AI medical imaging systems.

Regulatory Evolution

- The FDA and EMA are developing standards for synthetic medical images.

- Potential certification for diffusion model platforms by 2027-2028.

- International harmonization of validation protocols for generative AI healthcare.

The Future of Synthetic Medical Data

Near-Term Developments (1-2 Years)

- Real-time clinical integration with hospital PACS.

- Multi-modal medical image synthesis (CT ↔ MRI ↔ PET translation).

- Personalized digital twins for treatment planning using diffusion models.

Medium-Term Innovations (3-5 Years)

- FDA acceptance of synthetic-primary training datasets for AI medical imaging.

- Global federated synthetic medical data networks.

- Hybrid human-AI diagnostic workflows leveraging diffusion models for medical imaging.

Long-Term Vision (5-10 Years)

- Every rare disease has sufficient training data via medical image synthesis.

- Synthetic-first clinical trial design using generative AI.

- Democratization: Small hospitals access world-class synthetic medical image datasets.

Conclusion: Transforming Medical AI with Diffusion Models

Diffusion models represent more than technical innovation in medical imaging; they represent a paradigm shift. Medical research has historically been constrained by data availability. Rare diseases went unstudied. Promising AI approaches were abandoned. Clinical trials struggled with diversity.

Synthetic medical image generation inverts this constraint. The bottleneck shifts from slow, expensive data collection to fast, scalable medical image synthesis. AI medical imaging is no longer limited by how much data we can collect, but by how intelligently we can generate it.

Key Takeaways

- Synthetic medical images that radiologists could barely distinguish from real scans (0.54 AUC).

- 8-23% diagnostic accuracy improvements demonstrated in AI medical imaging.

- $2M+ cost savings for rare disease research via diffusion models.

- Privacy-preserving multi-institutional collaboration enabled through synthetic medical images.

- Regulatory frameworks are evolving toward the acceptance of medical image synthesis.

None of this diminishes real-world evidence, which remains the gold standard for validation. But synthetic medical images augment and extend real data, enabling research at previously impossible scales.

Sources and References

1. Sizikova, E., et al. (2024). “Synthetic data in radiological imaging: current state and future outlook.” BJR|Artificial Intelligence, Volume 1, Issue 1.

2. Kazerouni, A., et al. (2023). “Diffusion models in medical imaging: A comprehensive survey.” Medical Image Analysis, May 2023.

3. Fei, B., et al. (2025). “UniMIE: A diffusion model for universal medical image enhancement.” Communications Medicine, Volume 5.
